Definition of Mutual Information

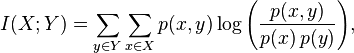

Formally, the mutual information of two discrete random variables X and Y can be defined as:

$$I(X;Y) = \sum_{y \in Y} \sum_{x \in X} p(x,y) \log\left(\frac{p(x,y)}{p(x)\,p(y)}\right)$$

where p(x,y) is the joint probability distribution function of X and Y, and p(x) and p(y) are the marginal probability distribution functions of X and Y respectively.
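As an illustration of the discrete definition, here is a minimal Python sketch. The function name `mutual_information`, the nested-list layout `joint[i][j] = p(x_i, y_j)`, and the example table are assumptions made for this example, not part of the original text. Zero-probability cells are skipped, following the usual convention that 0 log 0 = 0.

```python
import math

def mutual_information(joint, base=2.0):
    """I(X;Y) for a joint pmf given as joint[i][j] = p(x_i, y_j)."""
    # Marginals: sum the joint table over rows and over columns.
    p_x = [sum(row) for row in joint]
    p_y = [sum(col) for col in zip(*joint)]
    total = 0.0
    for i, row in enumerate(joint):
        for j, p_xy in enumerate(row):
            if p_xy > 0.0:  # convention: 0 * log(0) contributes nothing
                total += p_xy * math.log(p_xy / (p_x[i] * p_y[j]), base)
    return total

# Hypothetical joint distribution of two correlated binary variables.
joint = [[0.4, 0.1],
         [0.1, 0.4]]
mi_bits = mutual_information(joint)
print(f"I(X;Y) = {mi_bits:.4f} bits")
```

For this table both marginals are uniform, and the result is roughly 0.278 bits.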

In the case of continuous random variables, the summation is replaced by a definite double integral:

$$I(X;Y) = \int_Y \int_X p(x,y) \log\left(\frac{p(x,y)}{p(x)\,p(y)}\right) \, dx \, dy$$

where p(x,y) is now the joint probability density function of X and Y, and p(x) and p(y) are the marginal probability density functions of X and Y respectively.
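The continuous definition can be checked numerically in a simple case. The sketch below assumes a bivariate normal distribution with standard normal marginals and correlation `rho` (an example chosen here, not taken from the text), approximates the double integral with a Riemann sum on a truncated grid, and compares it with the known closed form −½ ln(1 − ρ²) in nats. The function name, grid limits, and step size are illustrative choices.

```python
import math

def gaussian_mi_numeric(rho, limit=6.0, step=0.05):
    """Riemann-sum estimate of I(X;Y) in nats for a standard bivariate normal."""
    n = int(round(2 * limit / step)) + 1
    grid = [-limit + k * step for k in range(n)]
    norm_joint = 1.0 / (2.0 * math.pi * math.sqrt(1.0 - rho * rho))
    norm_marginal = 1.0 / math.sqrt(2.0 * math.pi)
    total = 0.0
    for x in grid:
        p_x = norm_marginal * math.exp(-0.5 * x * x)
        for y in grid:
            p_y = norm_marginal * math.exp(-0.5 * y * y)
            q = (x * x - 2.0 * rho * x * y + y * y) / (2.0 * (1.0 - rho * rho))
            p_xy = norm_joint * math.exp(-q)
            # Integrand p(x,y) * log(p(x,y) / (p(x) p(y))) times the cell area.
            total += p_xy * math.log(p_xy / (p_x * p_y)) * step * step
    return total

rho = 0.6
estimate = gaussian_mi_numeric(rho)
closed_form = -0.5 * math.log(1.0 - rho * rho)
print(f"numeric: {estimate:.5f} nats, closed form: {closed_form:.5f} nats")
```

Truncating the integration range at six standard deviations discards only a negligible part of the probability mass, so the two values should agree closely.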

These definitions are ambiguous because the base of the log function is not specified. To disambiguate, the function I could be parameterized as I(X,Y,b) where b is the base. Alternatively, since the most common unit of measurement of mutual information is the bit, a base of 2 could be specified.
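Changing the base of the logarithm only rescales the result, so a value in one unit can be converted to another. The helper below is a hypothetical sketch of that conversion (the name `convert_information` is an assumption for this example): base 2 gives bits and base e gives nats.

```python
import math

def convert_information(value, from_base, to_base):
    """Rescale an information quantity between logarithm bases."""
    # log_b(t) = ln(t) / ln(b), so switching bases multiplies by ln(from) / ln(to).
    return value * math.log(from_base) / math.log(to_base)

one_bit_in_nats = convert_information(1.0, 2, math.e)
one_nat_in_bits = convert_information(1.0, math.e, 2)
print(f"1 bit = {one_bit_in_nats:.4f} nats, 1 nat = {one_nat_in_bits:.4f} bits")
```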

Intuitively, mutual information measures the information that X and Y share: it measures how much knowing one of these variables reduces uncertainty about the other. For example, if X and Y are independent, then knowing X does not give any information about Y and vice versa, so their mutual information is zero. At the other extreme, if X and Y are identical then all information conveyed by X is shared with Y: knowing X determines the value of Y and vice versa. As a result, in the case of identity the mutual information is the same as the uncertainty contained in Y (or X) alone, namely the entropy of Y (or X: clearly if X and Y are identical they have equal entropy).
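The two extremes described above can be reproduced with a small Python sketch. The marginal `p` and the function names are assumptions for this example. An independent pair is built as the product of marginals, and an identical pair places all probability on the diagonal of the joint table.

```python
import math

def entropy(p, base=2.0):
    """Shannon entropy of a pmf given as a list of probabilities."""
    return -sum(q * math.log(q, base) for q in p if q > 0.0)

def mutual_information(joint, base=2.0):
    """I(X;Y) for a joint pmf given as joint[i][j] = p(x_i, y_j)."""
    p_x = [sum(row) for row in joint]
    p_y = [sum(col) for col in zip(*joint)]
    return sum(
        p_xy * math.log(p_xy / (p_x[i] * p_y[j]), base)
        for i, row in enumerate(joint)
        for j, p_xy in enumerate(row)
        if p_xy > 0.0
    )

p = [0.5, 0.25, 0.25]  # hypothetical marginal distribution

# Independent case: p(x,y) = p(x) p(y).
independent = [[a * b for b in p] for a in p]
# Identical case: Y always equals X, so only diagonal cells are nonzero.
identical = [[p[i] if i == j else 0.0 for j in range(3)] for i in range(3)]

mi_independent = mutual_information(independent)
mi_identical = mutual_information(identical)
h_x = entropy(p)
print(mi_independent, mi_identical, h_x)
```

With this marginal, the independent table gives zero mutual information, while the identical table gives 1.5 bits, the same as the entropy of X.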

Mutual information is a measure of the inherent dependence expressed in the joint distribution of X and Y relative to the joint distribution of X and Y under the assumption of independence. Mutual information therefore measures dependence in the following sense: I(X;Y) = 0 if and only if X and Y are independent random variables. This is easy to see in one direction: if X and Y are independent, then p(x,y) = p(x) p(y), and therefore:

$$\log\left(\frac{p(x,y)}{p(x)\,p(y)}\right) = \log 1 = 0$$

so every term of the sum (or integral) is zero and I(X;Y) = 0.

Moreover, mutual information is nonnegative (i.e. I(X;Y) ≥ 0; see below) and symmetric (i.e. I(X;Y) = I(Y;X)).
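Both properties can be spot-checked numerically. The sketch below draws random joint tables (the helper `random_joint`, the table size, and the seed are arbitrary choices for this example) and compares I(X;Y) with I(Y;X), where the latter is computed from the transposed table. This is an empirical check up to floating-point tolerance, not a proof.

```python
import math
import random

def mutual_information(joint, base=2.0):
    """I(X;Y) for a joint pmf given as joint[i][j] = p(x_i, y_j)."""
    p_x = [sum(row) for row in joint]
    p_y = [sum(col) for col in zip(*joint)]
    return sum(
        p_xy * math.log(p_xy / (p_x[i] * p_y[j]), base)
        for i, row in enumerate(joint)
        for j, p_xy in enumerate(row)
        if p_xy > 0.0
    )

def random_joint(rows, cols, rng):
    """Random joint pmf: positive weights normalized to sum to 1."""
    weights = [[rng.random() for _ in range(cols)] for _ in range(rows)]
    total = sum(sum(row) for row in weights)
    return [[w / total for w in row] for row in weights]

rng = random.Random(0)
checks = []
for _ in range(100):
    joint = random_joint(3, 4, rng)
    transposed = [list(col) for col in zip(*joint)]  # swaps the roles of X and Y
    checks.append((mutual_information(joint), mutual_information(transposed)))

all_nonnegative = all(i_xy >= -1e-12 for i_xy, _ in checks)
all_symmetric = all(abs(i_xy - i_yx) < 1e-12 for i_xy, i_yx in checks)
print(all_nonnegative, all_symmetric)
```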
